# 3D Generation

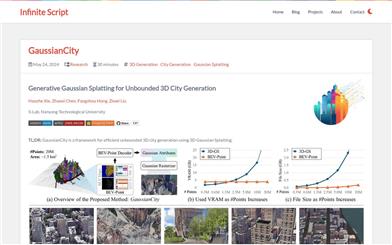

Gaussiancity

GaussianCity is a framework focused on efficiently generating boundless 3D cities, based on 3D Gaussian rendering technology. This technology, through compact 3D scene representation and a spatially aware Gaussian attribute decoder, solves the memory and computational bottlenecks encountered by traditional methods when generating large-scale city scenes. Its main advantage is the ability to quickly generate large-scale 3D cities in a single forward pass, significantly outperforming existing technologies. This product was developed by the S-Lab team at Nanyang Technological University, with the related paper published in CVPR 2025. The code and models have been open-sourced and are suitable for researchers and developers who need to efficiently generate 3D city environments.

3D Modeling

45.3K

Diffsplat

DiffSplat is an innovative 3D generation technology that quickly creates 3D Gaussian point clouds from text prompts and single-view images. This technology leverages a large-scale pre-trained text-to-image diffusion model to efficiently generate 3D content. It addresses the limitations of traditional 3D generation methods concerning dataset size and the ineffective use of 2D pre-trained models, while maintaining 3D consistency. Key advantages of DiffSplat include efficient generation speeds (completed in 1 to 2 seconds), high-quality 3D output, and support for various input conditions. The model has broad prospects in academic research and industrial applications, particularly in scenarios requiring the rapid generation of high-quality 3D models.

3D Modeling

50.2K

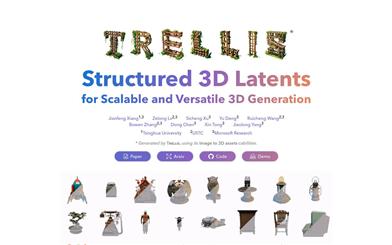

TRELLIS

TRELLIS is a native 3D generation model based on a unified structured latent representation and a correction transformer, capable of producing diverse and high-quality 3D assets. The model captures structural (geometric) and texture (appearance) information comprehensively by integrating sparse 3D meshes with dense multi-view visual features extracted from powerful visual foundation models, while maintaining flexibility during the decoding process. TRELLIS can handle up to 2 billion parameters and has been trained on a large dataset of 3D assets containing 500,000 diverse objects. It generates high-quality results conditioned on text or images, significantly surpassing existing methods, including recent approaches of similar scale. TRELLIS also demonstrates flexible output format options and local 3D editing capabilities, which were not provided by previous models. Source code, models, and data will be made available.

3D Modeling

88.0K

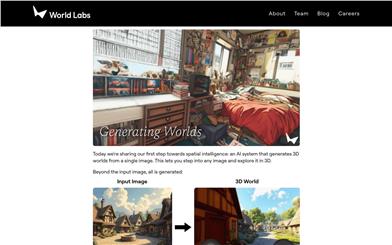

Generating Worlds

This AI system can create a 3D world from a single image, allowing users to immerse themselves in any picture for 3D exploration. This technology improves control and consistency, transforming the way we create films, games, simulators, and other digital expressions. It represents a significant step in spatial intelligence, enabling users to experience various camera effects and 3D effects while exploring classic artworks in real-time within their browser.

3D Modeling

49.4K

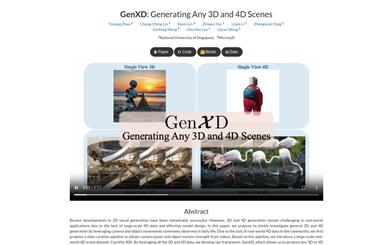

Genxd

GenXD is a framework focused on 3D and 4D scene generation, utilizing common camera and object motion found in everyday life to jointly study general 3D and 4D generation. Due to a lack of large-scale 4D data in the community, GenXD initially proposes a data planning process to extract camera poses and object motion intensity from videos. Based on this process, GenXD introduces a large-scale real-world 4D scene dataset: CamVid-30K. By leveraging all 3D and 4D data, the GenXD framework can generate any 3D or 4D scene. It offers a multi-view-time module that separates camera and object motion, learning seamlessly from 3D and 4D data. Furthermore, GenXD employs masked latent conditions to support various conditional views. GenXD can generate videos that follow camera trajectories and consistent 3D views that can be enhanced to 3D representations. It has undergone extensive evaluation across various real-world and synthetic datasets, demonstrating its effectiveness and versatility in 3D and 4D generation compared to previous methods.

3D Modeling

48.6K

Hunyuan3d 1

Hunyuan3D-1 is a unified framework introduced by Tencent for generating 3D models from text and images. The framework uses a two-stage approach: the first stage employs a multi-view diffusion model to quickly generate multi-view RGB images, while the second stage uses a feed-forward reconstruction model to swiftly construct 3D assets. Hunyuan3D-1.0 strikes an impressive balance between speed and quality, significantly reducing generation time while maintaining the quality and diversity of the generated assets.

3D Modeling

55.2K

Chinese Picks

Tencent Hunyuan 3D

Tencent Hunyuan 3D is an open-source 3D generation model designed to address the shortcomings in generation speed and generalization capabilities of existing 3D generation models. Utilizing a two-stage generation approach, the first stage rapidly generates multi-view images using a multi-view diffusion model, while the second stage quickly reconstructs 3D assets through a feed-forward reconstruction model. The Hunyuan 3D-1.0 model aids 3D creators and artists in automating the production of 3D assets, enabling quick single-image 3D generation, and completing end-to-end production—including mesh and texture extraction—within 10 seconds.

3D modeling

98.0K

Dreammesh4d

DreamMesh4D is a novel framework that combines mesh representation with sparse control deformation techniques to generate high-quality 4D objects from monocular videos. This technology addresses the challenges of spatial-temporal consistency and surface texture quality seen in traditional methods by integrating implicit neural radiance fields (NeRF) or explicit Gaussian drawing as underlying representations. Drawing inspiration from modern 3D animation workflows, DreamMesh4D binds Gaussian drawing to triangle mesh surfaces, enabling differentiable optimization of textures and mesh vertices. The framework starts with a rough mesh provided by single-image 3D generation methods and constructs a deformation graph by uniformly sampling sparse points to enhance computational efficiency while providing additional constraints. Through two-stage learning, it leverages reference view photometric loss, score distillation loss, and other regularization losses to effectively learn static surface Gaussians, mesh vertices, and dynamic deformation networks. DreamMesh4D outperforms previous video-to-4D generation methods in rendering quality and spatial-temporal consistency, and its mesh-based representation is compatible with modern geometric processes, showcasing its potential in the 3D gaming and film industries.

AI video generation

49.7K

Phidias

Phidias is an innovative generative model that utilizes diffusion technology for reference-enhanced 3D generation. This model generates high-quality 3D assets from images, text, or 3D conditions and can complete the process in seconds. It significantly improves generation quality, generalization capability, and controllability through the integration of three key components: a Meta-ControlNet that dynamically adjusts condition strength, dynamic reference routing, and self-reference enhancement. Phidias provides a unified framework for 3D generation using text, images, and 3D conditions, with a variety of application scenarios.

AI image generation

47.5K

Vfusion3d

VFusion3D is a scalable 3D generation model built on a pre-trained video diffusion model. It addresses the challenges of acquiring 3D data and its limited availability by fine-tuning the video diffusion model to generate a large-scale synthetic multi-view dataset, training a feedforward 3D generation model that can quickly create 3D assets from a single image. The model has excelled in user studies, with over 90% of users preferring VFusion3D's generated results.

AI image generation

51.9K

Ouroboros3d

Ouroboros3D is a unified 3D generation framework that integrates multi-view image generation and 3D reconstruction into a single recursive diffusion process. The framework jointly trains the two modules via a self-supervised mechanism, enabling them to adapt to each other and achieve robust inference. During multi-view denoising, the multi-view diffusion model utilizes 3D-aware rendered images from the reconstruction module at the previous timestep as additional conditioning. The combination of the recursive diffusion framework with 3D-aware feedback improves the overall geometric consistency of the process. Experiments demonstrate that the Ouroboros3D framework outperforms both separate training of the two stages and existing methods that combine them at inference time.

AI image generation

63.8K

Interactive3d

Interactive3D is an advanced 3D generation model that provides users with precise control capabilities through interactive design. The model utilizes a two-stage cascading structure, employing different 3D representation methods, allowing users to modify and guide at any intermediate step of the generation process. Its significance lies in the ability to achieve fine control over the 3D model generation process, thereby creating high-quality 3D models that meet specific requirements.

AI 3D tools

48.6K

GRM

GRM is a large-scale reconstruction model that can recover 3D assets from sparse view images in 0.1 seconds and achieve generation in 8 seconds. It is a feed-forward Transformer-based model that can efficiently fuse multi-view information to convert input pixels into pixel-aligned Gaussian distributions. These Gaussian distributions can be back-projected into a dense 3D Gaussian distribution collection representing the scene. Our Transformer architecture and the use of 3D Gaussian distributions unlock a scalable and efficient reconstruction framework. Extensive experimental results demonstrate that our method surpasses other alternatives in terms of reconstruction quality and efficiency. We also showcase GRM's potential in generation tasks (such as text-to-3D and image-to-3D) by combining it with existing multi-view diffusion models.

AI image generation

58.8K

Stable Video 3D

Developed by Stability AI, Stable Video 3D represents a significant advancement in 3D technology. It offers substantial quality improvements and multi-view support compared to its predecessor, Stable Zero123. This model can generate track videos based on a single input image without any camera data and create 3D videos along specified camera paths.

AI video generation

151.2K

LGM

LGM is a novel framework for generating high-resolution 3D models from textual prompts or single-view images. Its key insights include: (1) 3D Representation: We propose a multi-view Gaussian feature as an efficient yet powerful representation that can be fused for differentiable rendering. (2) 3D Backbone: We present an asymmetric U-Net as a high-throughput backbone operation for multi-view images, which can be utilized to generate from text or single-view image inputs using multi-view diffusion models. Extensive experiments demonstrate the high fidelity and efficiency of our method. Notably, we achieve high-resolution 3D content generation while maintaining fast rendering speed for 3D objects, even when training resolution is increased to 512x512.

3D Modeling

73.1K

Hexagen3d

HexaGen3D is an innovative approach to generating high-quality 3D assets from text prompts. It leverages a large pre-trained 2D diffusion model, fine-tuned from a pre-trained text-to-image model, to jointly predict six orthogonal projections and corresponding latent trimeshes. These latent values are then decoded to generate textured meshes. HexaGen3D does not require optimization for each sample and can infer high-quality, diverse objects within 7 seconds from text prompts, providing a better balance of quality and latency compared to existing methods. Additionally, HexaGen3D demonstrates strong generalization capabilities for novel objects or combinations.

AI 3D tools

49.1K

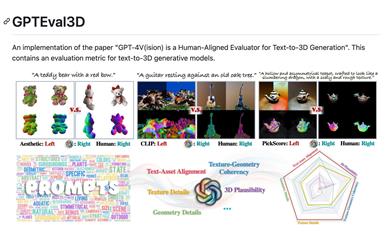

Gpteval3d

GPTEval3D is an open-source tool for evaluating 3D generation models. Based on GPT-4V, it enables automatic evaluation of text-to-3D generation models. It can calculate the ELO score of the generated models and compare them with existing models for ranking. This user-friendly tool supports custom evaluation datasets, allowing users to fully leverage the evaluation capabilities of GPT-4V. It serves as a powerful tool for researching 3D generation tasks.

AI Model Evaluation

75.1K

Featured AI Tools

Flow AI

Flow is an AI-driven movie-making tool designed for creators, utilizing Google DeepMind's advanced models to allow users to easily create excellent movie clips, scenes, and stories. The tool provides a seamless creative experience, supporting user-defined assets or generating content within Flow. In terms of pricing, the Google AI Pro and Google AI Ultra plans offer different functionalities suitable for various user needs.

Video Production

42.8K

Nocode

NoCode is a platform that requires no programming experience, allowing users to quickly generate applications by describing their ideas in natural language, aiming to lower development barriers so more people can realize their ideas. The platform provides real-time previews and one-click deployment features, making it very suitable for non-technical users to turn their ideas into reality.

Development Platform

44.7K

Listenhub

ListenHub is a lightweight AI podcast generation tool that supports both Chinese and English. Based on cutting-edge AI technology, it can quickly generate podcast content of interest to users. Its main advantages include natural dialogue and ultra-realistic voice effects, allowing users to enjoy high-quality auditory experiences anytime and anywhere. ListenHub not only improves the speed of content generation but also offers compatibility with mobile devices, making it convenient for users to use in different settings. The product is positioned as an efficient information acquisition tool, suitable for the needs of a wide range of listeners.

AI

42.5K

Minimax Agent

MiniMax Agent is an intelligent AI companion that adopts the latest multimodal technology. The MCP multi-agent collaboration enables AI teams to efficiently solve complex problems. It provides features such as instant answers, visual analysis, and voice interaction, which can increase productivity by 10 times.

Multimodal technology

43.1K

Chinese Picks

Tencent Hunyuan Image 2.0

Tencent Hunyuan Image 2.0 is Tencent's latest released AI image generation model, significantly improving generation speed and image quality. With a super-high compression ratio codec and new diffusion architecture, image generation speed can reach milliseconds, avoiding the waiting time of traditional generation. At the same time, the model improves the realism and detail representation of images through the combination of reinforcement learning algorithms and human aesthetic knowledge, suitable for professional users such as designers and creators.

Image Generation

42.2K

Openmemory MCP

OpenMemory is an open-source personal memory layer that provides private, portable memory management for large language models (LLMs). It ensures users have full control over their data, maintaining its security when building AI applications. This project supports Docker, Python, and Node.js, making it suitable for developers seeking personalized AI experiences. OpenMemory is particularly suited for users who wish to use AI without revealing personal information.

open source

42.8K

Fastvlm

FastVLM is an efficient visual encoding model designed specifically for visual language models. It uses the innovative FastViTHD hybrid visual encoder to reduce the time required for encoding high-resolution images and the number of output tokens, resulting in excellent performance in both speed and accuracy. FastVLM is primarily positioned to provide developers with powerful visual language processing capabilities, applicable to various scenarios, particularly performing excellently on mobile devices that require rapid response.

Image Processing

41.4K

Chinese Picks

Liblibai

LiblibAI is a leading Chinese AI creative platform offering powerful AI creative tools to help creators bring their imagination to life. The platform provides a vast library of free AI creative models, allowing users to search and utilize these models for image, text, and audio creations. Users can also train their own AI models on the platform. Focused on the diverse needs of creators, LiblibAI is committed to creating inclusive conditions and serving the creative industry, ensuring that everyone can enjoy the joy of creation.

AI Model

6.9M